In our previous post, Operationalizing Agentic Applications with Microsoft Fabric, we focused on a core challenge teams encounter once an agentic application moves beyond a proof of concept: operational reality. Specifically, how do you observe, govern, evaluate, and analyze what agents are doing once they interact with real users, data, and business processes at scale? That post introduced a production‑minded reference architecture, grounded in Microsoft Fabric, that treats agent activity as first‑class, governed data rather than opaque logs.

Since then, the solution accelerator behind that reference implementation has evolved. The updates are intentionally pragmatic rather than conceptual: fewer manual steps during deployment, clearer separation of agent responsibilities, and a more explicit use of Fabric’s native AI capabilities where they add real operational value. This post focuses on two of those changes:

- A script‑driven deployment model, using Microsoft Fabric REST APIs, that reduces manual configuration.

- The introduction of a Fabric Data Agent as an optional, read‑only agent.

Together, these changes aim to make agentic applications not only observable, governed, and optimized, but also easier to deploy consistently and safer to expose to broader audiences.

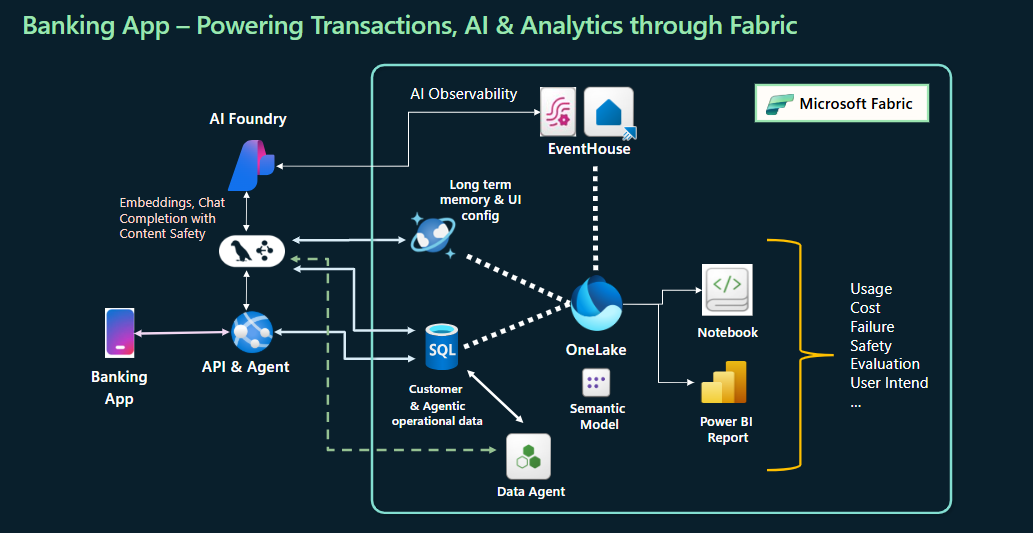

Figure: Agentic app’s evolved architecture.

Scripted deployment with Fabric REST APIs

Microsoft Fabric exposes REST APIs for item and capacity management, allowing developers to automate common provisioning tasks such as creating Lakehouses, SQL databases, or other items within a workspace. In the updated agentic app solution, these APIs are used to perform “day‑zero” setup tasks that previously required some manual intervention.
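As a rough illustration of what such a day‑zero call can look like, the sketch below builds (but does not send) a request against the Fabric REST API's item‑creation endpoint. The workspace ID, display name, and token are placeholders, and the helper name `build_create_item_request` is hypothetical; the accelerator's own scripts may be organized differently.

```python
import json
import urllib.request

FABRIC_API = "https://api.fabric.microsoft.com/v1"


def build_create_item_request(
    workspace_id: str, display_name: str, item_type: str, token: str
) -> urllib.request.Request:
    """Build (without sending) a POST request that creates a Fabric item."""
    body = json.dumps({"displayName": display_name, "type": item_type}).encode("utf-8")
    return urllib.request.Request(
        url=f"{FABRIC_API}/workspaces/{workspace_id}/items",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# Placeholder values for illustration only; supply a real workspace ID and
# a Microsoft Entra access token before sending.
req = build_create_item_request(
    workspace_id="00000000-0000-0000-0000-000000000000",
    display_name="agentic_lakehouse",
    item_type="Lakehouse",
    token="PLACEHOLDER_TOKEN",
)
print(req.get_method(), req.full_url)
# To actually send it: urllib.request.urlopen(req)
```

Keeping request construction separate from sending makes the payload easy to review and unit test before it touches a real workspace.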

From an operational perspective, the significance is not speed but repeatability. Scripted deployment enables:

- Consistent environment setup across dev, test, and production.

- Reduced configuration drift, especially in teams with multiple contributors.

- Clearer ownership, as infrastructure choices are encoded and version‑controlled.

This aligns with documented guidance for Fabric automation, which positions the REST APIs as a means of automating supported procedures.
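One way to get that repeatability is to make the script idempotent: compare the items you want with the items the workspace already contains, and create only what is missing. The sketch below shows that planning step in isolation. `ItemSpec` and `plan_missing_items` are hypothetical names, the item names are invented, and the `existing` entries are assumed to carry `displayName` and `type` fields like those returned when listing workspace items.

```python
from typing import Iterable, NamedTuple


class ItemSpec(NamedTuple):
    """A desired workspace item, as it might be declared in version control."""
    display_name: str
    item_type: str


def plan_missing_items(
    desired: Iterable[ItemSpec], existing: Iterable[dict]
) -> list[ItemSpec]:
    """Return the desired items that are not yet present in the workspace."""
    present = {(item["displayName"], item["type"]) for item in existing}
    return [
        spec for spec in desired
        if (spec.display_name, spec.item_type) not in present
    ]


# Hypothetical desired state for one environment.
desired = [
    ItemSpec("agentic_lakehouse", "Lakehouse"),
    ItemSpec("agentic_sqldb", "SQLDatabase"),
]

# Assumed shape of an item listing; only the two fields used here are shown.
existing = [{"displayName": "agentic_lakehouse", "type": "Lakehouse"}]

to_create = plan_missing_items(desired, existing)
print(to_create)
```

Running the same plan against dev, test, and production then yields only the differences for each environment, which is what keeps reruns safe.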

Introducing a Data Agent as a complement, not a replacement

The second notable update is the introduction of a Fabric Data Agent as an optional, read-only agent within the application. You can enable it as part of the agent team by setting an environment variable, and detailed guidance is provided to help you configure the Data Agent effectively. Note that this agent is deliberately scoped to answering questions over curated, structured data; it does not perform transactions or trigger actions.
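A minimal sketch of an environment‑variable toggle of this kind is shown below. The variable name `ENABLE_FABRIC_DATA_AGENT` and the agent names are assumptions made for illustration; check the repo for the actual setting it expects.

```python
import os
from typing import Mapping, Optional

# Hypothetical variable name; the accelerator may use a different one.
DATA_AGENT_FLAG = "ENABLE_FABRIC_DATA_AGENT"


def data_agent_enabled(env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True when the flag is set to a truthy value."""
    env = os.environ if env is None else env
    return env.get(DATA_AGENT_FLAG, "false").strip().lower() in {"1", "true", "yes"}


def build_team(env: Optional[Mapping[str, str]] = None) -> list[str]:
    """Assemble the agent team, adding the Data Agent only when enabled."""
    team = ["orchestrator", "task_agent"]  # hypothetical agent names
    if data_agent_enabled(env):
        team.append("fabric_data_agent")
    return team


print(build_team({}))
print(build_team({DATA_AGENT_FLAG: "true"}))
```

Defaulting to disabled keeps the agent opt‑in, so an environment that never sets the flag behaves as before.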

In the original design, task‑oriented agents handled both reasoning and data retrieval. While this works, it conflates two concerns: deciding what to do and answering what the data says. Over time, that blending can make safety and evaluation harder, particularly when users begin to ask exploratory or analytical questions.

Fabric Data Agents are explicitly designed to operate over governed Fabric data sources—such as Lakehouses, semantic models, and other supported Fabric data asset types—and to return answers using natural language. They do not support create, update, or delete operations; that constraint is intentional.

By introducing a Data Agent alongside task‑focused agents, the architecture makes a clearer distinction:

- Task agents reason, plan, and orchestrate actions.

- Data agents answer questions about existing data, grounded in governed models.

This separation simplifies both security review and mental models for users and operators.
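The distinction can be made concrete in application code by giving each kind of agent a different interface. The sketch below is purely illustrative: the `TaskAgent` and `DataAgent` protocols, the `dispatch` function, and the stub classes are hypothetical and are not taken from the accelerator. The point is that the data‑agent interface exposes a single question‑answering method and nothing that could mutate state.

```python
from typing import Optional, Protocol


class TaskAgent(Protocol):
    def act(self, instruction: str) -> str: ...


class DataAgent(Protocol):
    # Only a read path is exposed: no create, update, or delete methods.
    def ask(self, question: str) -> str: ...


def dispatch(
    kind: str, text: str, task_agent: TaskAgent, data_agent: Optional[DataAgent]
) -> str:
    """Send data questions to the Data Agent when present, all else to the task agent."""
    if kind == "question" and data_agent is not None:
        return data_agent.ask(text)
    return task_agent.act(text)


# Stub implementations so the sketch runs without any external service.
class StubTaskAgent:
    def act(self, instruction: str) -> str:
        return f"task:{instruction}"


class StubDataAgent:
    def ask(self, question: str) -> str:
        return f"data:{question}"


print(dispatch("question", "total orders last week", StubTaskAgent(), StubDataAgent()))
print(dispatch("action", "open a support ticket", StubTaskAgent(), StubDataAgent()))
```

When no Data Agent is supplied, questions fall back to the task agent, mirroring the original design in which task agents handled both concerns.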

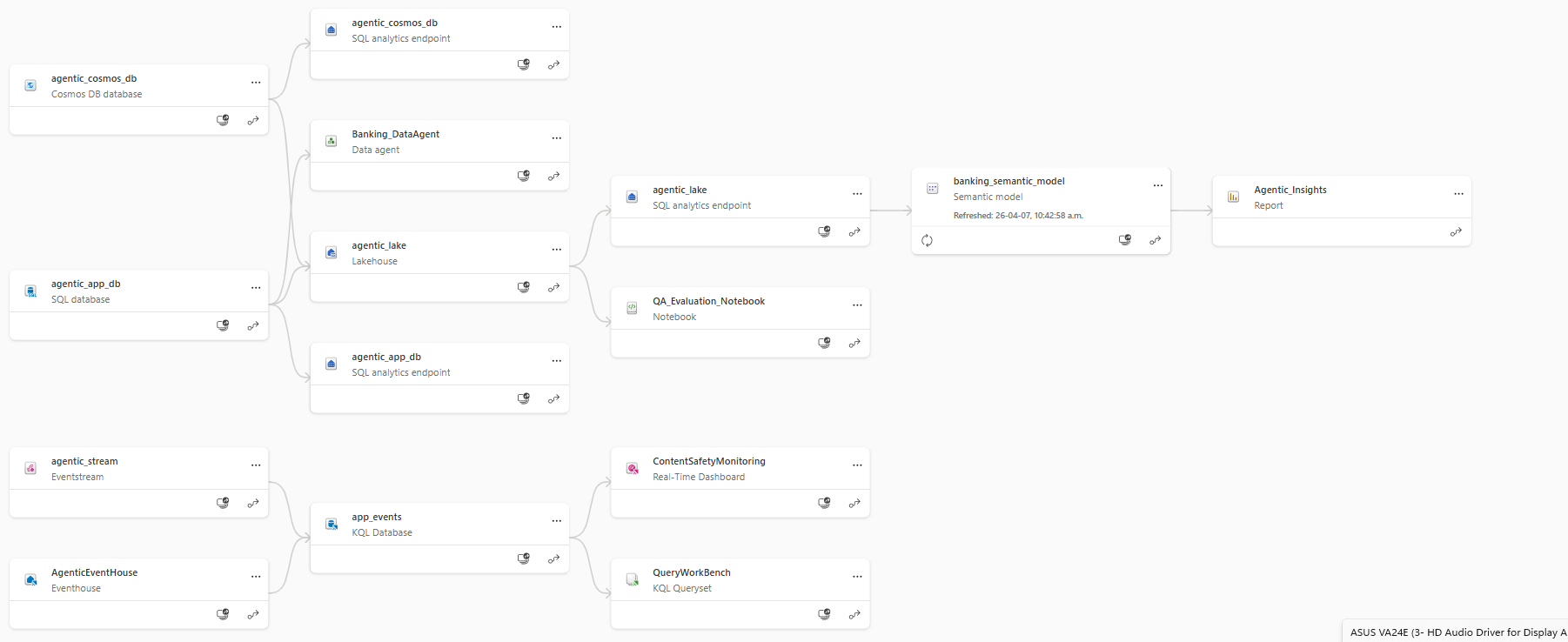

Figure: Fabric Components Lineage View.

Why consider Fabric’s Data Agent for agentic apps?

From a customer perspective, the value of using Fabric’s Data Agent is not novelty, but alignment with existing data governance. Fabric Data Agents use the same underlying permissions, semantic models, and OneLake‑backed data foundation as the rest of the Fabric platform.

This has several practical implications:

Read‑only by Design

Fabric Data Agents do not support mutating operations. As documented, they answer questions using SQL, DAX, or KQL under the hood, but they cannot modify data. This makes them suitable for scenarios where users need transparency and insight without the risk of unintended side effects.

Grounded in Curated Models

Rather than exposing raw tables, Data Agents rely on whatever models and tables you explicitly configure. This encourages teams to think carefully about what data should be queryable in natural language, and to reuse the same semantic definitions already used for reporting and analytics.

Consistent Governance Across Analytics and AI

Because the Data Agent operates within Fabric, it inherits workspace‑level governance, access controls, and auditing. This reduces the need to build parallel security models just to support AI interactions.

Operational benefits without architectural overreach

Taken together, these updates reflect a broader design principle: operational maturity comes from narrowing responsibilities, not expanding them indiscriminately.

Scripted deployment ensures that infrastructure is predictable and reviewable. A dedicated Data Agent ensures that data access is controlled, explainable, and easier to evaluate independently from task execution. Neither change alters the core agentic concepts explored in the original post. Instead, they reduce friction and risk as systems scale.

Closing thoughts

Agentic applications are most compelling when their intelligence is matched by their operational discipline. The recent updates to the Fabric‑based reference implementation reflect lessons learned from early adopters about what matters in practice: automation, clarity of agent roles, and tight integration with governed data platforms.

These are not features to be marketed in isolation, but safeguards that make agentic systems usable and trustworthy in production. For customers already invested in Microsoft Fabric, they offer a way to extend existing governance and deployment practices into the agentic layer without inventing new ones unnecessarily.

We invite you to explore the repo at aka.ms/AgenticAppFabric and to share your insights by opening an issue in the GitHub repo.
